Rethinking Reliability: Advancing Fairness and Precision in Student Assessments

Sharper Tools for an Uneven Testing Ground

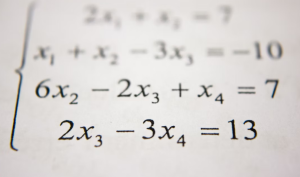

This week, a compelling study in Educational and Psychological Measurement reports a promising advance in detecting differential item functioning (DIF). DIF occurs when students of equal ability answer a test item correctly at different rates depending on the group they belong to, which signals that the item may be biased. The researchers questioned the reliability of traditional p-value methods, which are widely used to flag items that unfairly favor certain groups of students. These classical approaches tend to struggle when test assumptions are violated. Such violations are common when a test assesses several skills at once (multidimensionality) or when questions cluster together in “testlets” (local dependence), such as a set of items built around a single reading passage. Through simulation studies, the authors make the case for e-values, a newer statistical tool that controls false positives more effectively, especially as sample sizes grow large. As a result, educators and psychologists can place more trust in which items truly need review or removal, which enhances fairness in high-stakes testing.
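For readers curious about the mechanics, the core guarantee behind e-values fits in a few lines of code. The sketch below is a generic textbook construction, not the procedure used in the study. It assumes a toy setting in which the no-DIF probability of answering one item correctly, `p0`, is already known, and it compares that probability with a single hypothetical alternative, `p1`. The likelihood ratio of the focal group's responses under `p1` versus `p0` averages exactly 1 when there is no DIF. By Markov's inequality, flagging the item only when this e-value reaches 1/α therefore keeps the false-positive rate at or below α at any sample size. All function names and parameter values here are illustrative assumptions.

```python
import math
import random


def log_e_value(responses, p0, p1):
    """Log of a likelihood-ratio e-value for one item (hypothetical toy setup).

    H0 (no DIF): each focal-group student answers correctly with probability p0.
    Alternative: the probability is p1. Under H0, each student's factor
    contributes an expected value of p1 + (1 - p1) = 1, so the product of the
    factors has expectation 1, which is what makes it an e-value.
    """
    if not (0 < p0 < 1 and 0 < p1 < 1):
        raise ValueError("p0 and p1 must lie strictly between 0 and 1")
    n_correct = sum(responses)
    n_incorrect = len(responses) - n_correct
    # Work on the log scale to avoid overflow or underflow with large samples.
    return (n_correct * math.log(p1 / p0)
            + n_incorrect * math.log((1 - p1) / (1 - p0)))


def flag_item(log_e, alpha=0.05):
    """Flag the item when E >= 1/alpha. Markov: P(E >= 1/alpha) <= alpha under H0."""
    return log_e >= math.log(1 / alpha)


def null_false_positive_rate(n_students, p0=0.6, p1=0.7, alpha=0.05,
                             n_reps=1000, seed=1):
    """Simulate items with no DIF and return the share that get flagged anyway."""
    rng = random.Random(seed)
    flags = 0
    for _ in range(n_reps):
        # Responses generated under H0: no DIF on this item.
        responses = [1 if rng.random() < p0 else 0 for _ in range(n_students)]
        if flag_item(log_e_value(responses, p0, p1), alpha):
            flags += 1
    return flags / n_reps


if __name__ == "__main__":
    for n in (50, 200, 1000):
        print(f"n = {n:5d}: false-positive rate = {null_false_positive_rate(n):.3f}")
```

A real DIF analysis would also have to match students on ability and estimate the no-DIF model from the data. This toy only illustrates why the 1/α threshold limits false alarms without relying on large-sample approximations.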

Implications for Special Populations

The impact of this research extends directly to special education populations, where test precision is paramount. Because students with disabilities often have diverse cognitive profiles, assessments can unintentionally become multidimensional (measuring more than one skill at once) or locally dependent (containing items whose responses hinge on one another beyond what the targeted ability explains). These conditions can undermine traditional DIF detection and blur the line between genuine item bias and true differences in ability. By demonstrating that e-value methods maintain robust error control even under such challenging conditions, the study offers tangible hope for more equitable measurement practices. For school psychologists involved in evaluation, this means stronger evidence of validity and fairness when interpreting student data, which informs vital decisions about accommodations and services.

A Step Toward Evidence-Based Educational Practice

Beyond technical improvements, this work underscores a broader shift toward evidence-based practice in educational measurement. Using data from the Progress in International Reading Literacy Study (PIRLS), the investigators showed that e-value procedures yield a more defensible set of flagged items, one less prone to false alarms. Educators and policymakers rely on test data to drive instruction, allocate resources, and support systemic change. Improving the accuracy of these signals reduces the risk of misguided interventions or missed opportunities for support. As educational systems evolve, integrating such robust statistical frameworks will be critical to sustaining equitable and effective practices.

As school psychology continues to bridge research and daily practice, staying abreast of innovations like e-value methods is vital. They remind us that the science behind our assessments must evolve to meet the complexities of real-world classrooms. Keep following this newsletter for more evidence-based insights that empower you to navigate and lead positive change in student mental health, special education, and school success.